Hi 3.14 (pi?)

Thanks for prodding me re. github! I've been meaning to look into getting these projects on something like that for like forever. I've got Hive over on opencores.org, but the site is kinda cumbersome and kinda dead seeming, as I get only maybe one doc download per day. So I signed up for a free github account and will investigate the process shortly. If you have any hints or tips I'm all ears.

Yes, I calculated the coils via my spreadsheet. I also measured them and adjusted them a bit after winding and before I installed the leads and nail polish, though final measurement and adjustment isn't really necessary for this application. Larger diameter wire has lower DCR and less skin effect, which helps keep Q high, though you're also dealing with radiation losses, so Q can only be so high in the completed circuit, and one quickly encounters diminishing returns. I usually aim for a coil with a height:diameter ratio somewhere around 1:1 to 2:1. 1:1 will provide close to ideal inductance for a given overall wire length, while higher ratios give more distance between the drive end and the sense end (likely less intrinsic capacitance), though nothing in this range of ratios is all that critical. You generally want the coil height to be a minimum of maybe 30mm or 40mm just to keep the capacitance down. I suppose I also try not to waste copper if it's not necessary to the function.
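
For anyone who wants to play with the numbers, here is a minimal sketch of this kind of coil calculation. It assumes Wheeler's classic approximation for single-layer air-core coils, which is usually quoted as good to about 1% when the coil is longer than 0.8 times its radius. The spreadsheet itself may well use a different formula, and the function names and the example coil dimensions are made up purely for illustration.

```python
import math

MM_PER_INCH = 25.4

def wheeler_inductance_uH(diameter_mm, length_mm, turns):
    """Approximate inductance in microhenries of a single-layer air-core coil.

    Assumption: Wheeler's approximation, L = r^2 * n^2 / (9r + 10l),
    with radius r and winding length l in inches.
    """
    r_in = diameter_mm / 2 / MM_PER_INCH
    l_in = length_mm / MM_PER_INCH
    return (r_in ** 2 * turns ** 2) / (9 * r_in + 10 * l_in)

def wire_length_m(diameter_mm, turns):
    """Rough wire length in meters, ignoring winding pitch and leads."""
    return math.pi * diameter_mm * turns / 1000.0

if __name__ == "__main__":
    # Hypothetical coil: 50 mm diameter, 75 mm tall (1.5:1), 300 turns.
    d_mm, h_mm, n = 50.0, 75.0, 300
    print(f"height:diameter = {h_mm / d_mm:.2f}:1")
    print(f"inductance ~ {wheeler_inductance_uH(d_mm, h_mm, n):.0f} uH")
    print(f"wire length ~ {wire_length_m(d_mm, n):.1f} m")
```

Changing the diameter and height while holding the wire length roughly constant gives a feel for how little the inductance moves across the 1:1 to 2:1 range.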

I maintain PLL lock on both the pitch and volume sides. I know what you're saying though, as analog Theremin volume sides often work as you describe, and one could conceivably build a digital Theremin that worked that way. I think FredM's idea of "upside down" circuitry was similar to this, with one frequency driving both antennas. But I've found that with sufficient frequency difference and sufficient distance separating the antennas, the interference is moot. Indeed, no Theremin would work if this weren't the case. Phase lock gives you the highest amplitude voltage swing in all situations, and the highest selectivity and sensitivity. Not using phase lock would also introduce a strong non-linearity in the response.

========

What kind of test equipment do you have? You should have a decent scope, DMM, function generator, and LC meter. I can give you suggestions as to brands and such if you like. I have an old Tektronix TDS210, a Fluke 76, a Goldstar FG8002, and a cheap though very useful LC meter from eBay. I also have an FC-1 frequency counter that has come in handy. You can get inexpensive assortments of small-value capacitors from eBay. You can use a standard plastic breadboard for experimenting (though you have to be aware of the parasitic capacitance).

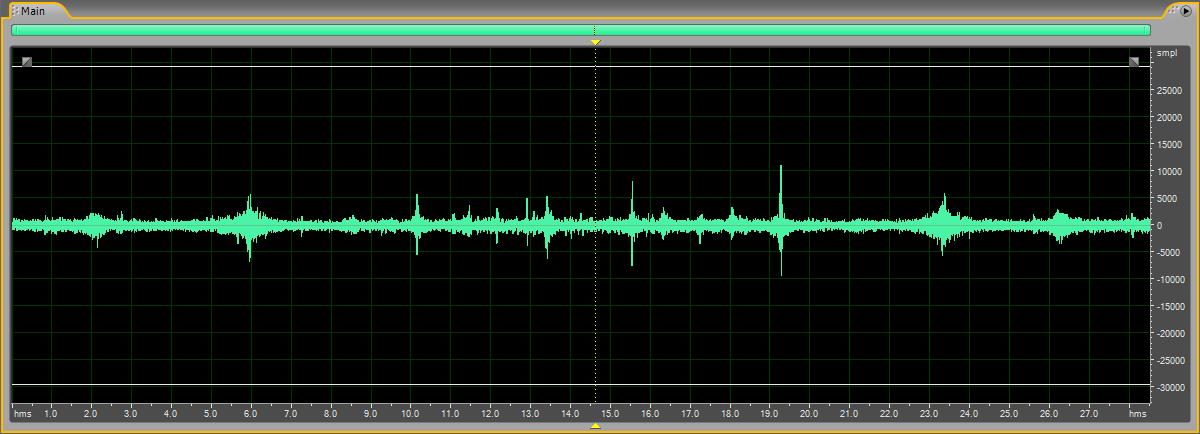

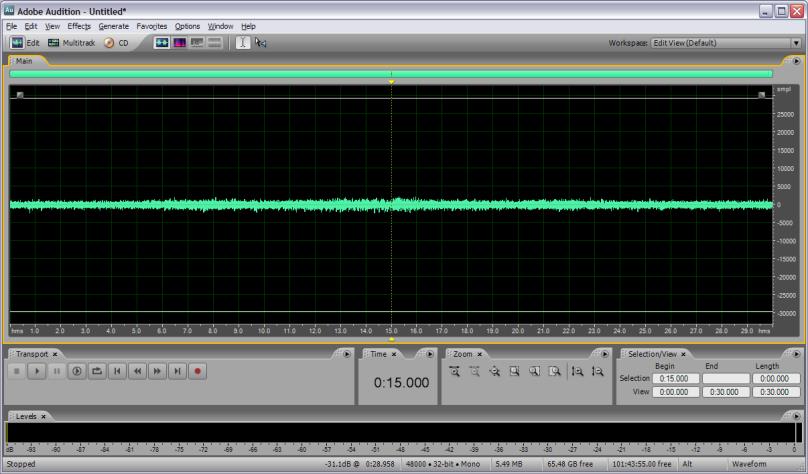

If you have a scope and a function generator, and you want to get a good intuitive feel for what is going on with the LC side of things, I can show you a simple setup that I've used quite a bit. With it you can clearly see the influence of your hand & body out past 1 meter, see the influence of mains hum, examine the relative stability of various oscillators, and quantify total Q (coil, antenna, drive, sense).
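
As a rough illustration of what "quantify total Q" can look like in numbers, here is a minimal sketch using the standard textbook relations: resonance at f = 1/(2π√(LC)) and Q = f_peak / (f_high - f_low), where f_low and f_high are the frequencies at which the amplitude drops to about 0.707 of its peak. It assumes you sweep the function generator by hand and read those three frequencies off the scope; this is not necessarily the setup described above, and the function names and example readings are hypothetical.

```python
import math

def resonant_frequency_hz(l_henry, c_farad):
    """Resonant frequency of an ideal LC tank, f = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(l_henry * c_farad))

def q_from_bandwidth(f_peak_hz, f_low_hz, f_high_hz):
    """Q estimated from the -3 dB (0.707 amplitude) points around the peak."""
    bandwidth_hz = f_high_hz - f_low_hz
    if bandwidth_hz <= 0:
        raise ValueError("f_high_hz must be greater than f_low_hz")
    return f_peak_hz / bandwidth_hz

if __name__ == "__main__":
    # Hypothetical tank: 1 mH coil with 10 pF of total capacitance.
    f0 = resonant_frequency_hz(1e-3, 10e-12)
    print(f"expected resonance ~ {f0 / 1e6:.3f} MHz")

    # Hypothetical scope readings from a manual sweep.
    q = q_from_bandwidth(f_peak_hz=500e3, f_low_hz=498e3, f_high_hz=502e3)
    print(f"total Q ~ {q:.0f}")
```

Because the measurement includes the coil, antenna, drive, and sense all together, the number you get this way is the total Q of the working circuit rather than the Q of the coil alone.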